enthalpy

Why do quasicrystals exist?

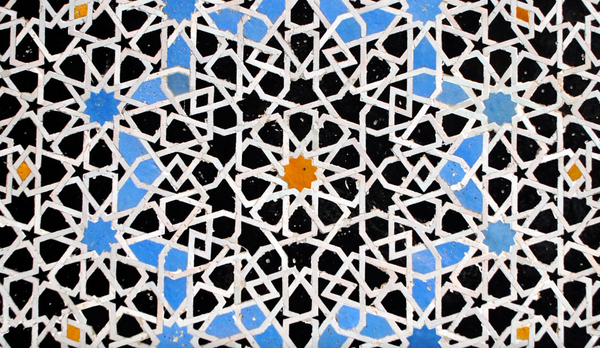

'Quasi' means almost. It's an unfair name for quasicrystals. These crystals exist in their own right. Their name comes from the internal arrangement of their atoms. A crystal is made up of a group of atoms arranged in a fixed way and repeated over and over. The smallest arrangement